containerd is probably the most widely deployed container runtime on the planet, and at the same time one of the least understood. When Kubernetes 1.20 announced the deprecation of dockershim in December 2020, and when Kubernetes 1.24 finalized its removal in May 2022, thousands of clusters ended up running containerd as their default runtime. Few operators noticed, which is precisely the signal that the migration went well: a properly integrated runtime is supposed to be invisible. This article makes it visible for a while, just enough to understand what actually runs underneath each pod.

What containerd Is and Isn’t

containerd is a high-level container runtime. Its responsibility is the full lifecycle of a container on a single machine: pulling images from a registry, storing them, creating a container from an image, starting, pausing and stopping it, mounting filesystem snapshots, and coordinating network namespaces with whatever CNI plugin the node has configured. All of that happens inside a daemon, without a GUI and without developer-experience niceties. It does not build images (it has no Dockerfile concept), it does not orchestrate (that is Kubernetes’ job on top), it does not compose multiple containers, and it does not try to replace an interactive tool like the Docker CLI.

containerd was originally an internal component of Docker Engine. In March 2017 it was donated to the CNCF; in February 2019 it reached graduated status, the label the CNCF reserves for pieces considered mature and well governed. Since then it has quietly become the common substrate on which Docker, Kubernetes, BuildKit and a long list of other tools build.

The Layered Stack

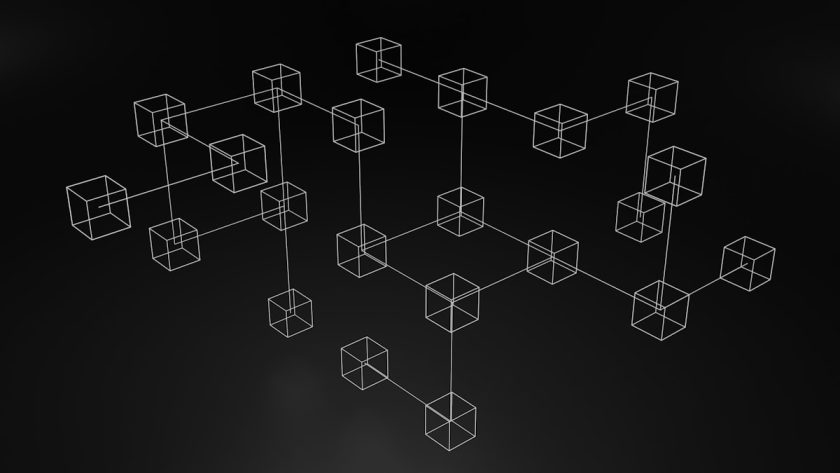

Understanding containerd means looking at the full stack that runs a container on Linux. At the top, Kubernetes orchestrates and decides which pods go to which nodes. It talks down to containerd over CRI, the Container Runtime Interface, a gRPC API Kubernetes defined to decouple itself from any specific runtime. containerd in turn talks to a low-level runtime following the OCI specification: usually runc, the reference implementation, which actually asks the kernel to create namespaces, wire up cgroups, and apply capabilities and seccomp profiles.

This layering is what lets pieces be swapped without breaking anything above them. You can replace runc with crun (the C reimplementation), with gVisor (a userspace sandbox for strong multi-tenant isolation), or with Kata Containers (a microVM per container), and Kubernetes neither knows nor cares. You can replace containerd with CRI-O by installing it on the node and pointing kubelet’s --container-runtime-endpoint flag at its socket. CRI and OCI are the two contracts that make all of this possible.

Two concepts inside containerd are worth naming. containerd namespaces are not kernel namespaces: they are logical partitions that separate images and containers belonging to different consumers. Kubernetes uses k8s.io by convention; Docker uses moby. Querying the daemon with ctr without specifying a namespace falls back to the namespace named default, so a node packed with pods looks empty. Snapshotters are the components that prepare and mount the layered filesystems containers run on: the default is overlayfs, and alternatives (native, btrfs, zfs, stargz for lazy pulling) are selected in the daemon’s configuration.
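The namespace pitfall is easy to reproduce from a shell. What follows is a minimal sketch, assuming ctr is installed and the caller can reach the containerd socket (normally as root); the function name list_containers_per_namespace is illustrative and not part of containerd.

```shell
#!/bin/sh
# Sketch: list the containers held in every containerd namespace.
# Assumes ctr is on PATH and the containerd socket is reachable.
# list_containers_per_namespace is a hypothetical helper name.
list_containers_per_namespace() {
    if ! command -v ctr >/dev/null 2>&1; then
        echo "ctr not found" >&2
        return 1
    fi
    # `ctr namespaces ls -q` prints one namespace name per line.
    for ns in $(ctr namespaces ls -q); do
        echo "== namespace: $ns =="
        ctr --namespace "$ns" containers ls
    done
}
```

On a Kubernetes node the pod containers should show up under k8s.io; on a Docker host, under moby.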

containerd and Docker: The Real Relationship

The simple truth is that containerd and Docker have been the same thing underneath for years. Docker Engine has used containerd internally since version 1.11 in 2016; when you type docker run, Docker builds the OCI spec, calls containerd over a local socket, and containerd invokes runc. The command is a convenient abstraction sitting on top of the real runtime. That is why Kubernetes clusters said to “use Docker” were already running their containers through containerd; the dockershim was nothing but a translator between CRI and the Docker Engine API.

Removing dockershim in Kubernetes 1.24 eliminated that translation layer. Kubernetes now talks directly to containerd (or CRI-O, or any other CRI runtime) without routing through Docker Engine. For a cluster the change amounted to reconfiguring kubelet and restarting nodes. For a standalone server where you develop with Docker, nothing changes: Docker Engine remains valid, because it is Docker plus containerd plus surrounding tooling (compose, buildkit, CLI).

containerd vs CRI-O

CRI-O is the main alternative. It was born at Red Hat with an explicit goal: a minimal runtime built exclusively for Kubernetes, with nothing that CRI does not require. containerd is more general: Docker uses it, development environments use it, BuildKit uses it, Kubernetes uses it. Both implement CRI and OCI, both use runc by default, and their performance is comparable in serious benchmarks. Choosing between them is more a question of ecosystem and commercial support than of capability: containerd dominates upstream Kubernetes and cloud distributions (EKS, GKE and AKS have all moved to it), while CRI-O is standard in OpenShift and much of the Red Hat ecosystem.

Operating containerd Directly

Day to day with Kubernetes you never touch containerd: kubelet handles it. When something breaks on a node and kubectl describe stops being enough, knowing how to work one layer below turns a multi-hour incident into a short one. Three tools are worth having at hand. ctr, the native CLI that ships with containerd, is raw and unfriendly but lets you see exactly what the daemon holds. crictl is the official Kubernetes project tool for debugging CRI runtimes: it speaks to containerd through the CRI socket and offers pod- and container-oriented subcommands; its configuration lives in /etc/crictl.yaml and, for containerd, points at unix:///run/containerd/containerd.sock. nerdctl is a CLI whose commands are compatible with Docker’s but which talks to containerd directly, perfect for anyone coming from Docker who wants to keep the muscle memory intact.
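As a sketch of that crictl configuration, the function below writes a config file pointing at containerd’s socket. The endpoint is the one named above; the timeout and debug values, and the function name write_crictl_config, are assumptions chosen for illustration.

```shell
#!/bin/sh
# Sketch: write a crictl config that targets containerd's CRI socket.
# write_crictl_config is a hypothetical helper name; timeout and debug
# are illustrative values, not recommendations.
write_crictl_config() {
    target="${1:-/etc/crictl.yaml}"
    cat > "$target" <<'EOF'
runtime-endpoint: unix:///run/containerd/containerd.sock
image-endpoint: unix:///run/containerd/containerd.sock
timeout: 10
debug: false
EOF
}
```

Called with no argument it targets /etc/crictl.yaml and needs root; pass another path first to review the result before installing it.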

```shell
# Typical debug from a Kubernetes node running containerd
sudo crictl pods --namespace kube-system
sudo crictl ps -a
sudo crictl logs --tail 200 <container-id>
sudo crictl exec -it <container-id> sh
sudo crictl inspecti registry.k8s.io/pause:3.9

# If you miss Docker syntax
sudo nerdctl --namespace k8s.io ps
sudo nerdctl --namespace k8s.io images

# And to watch the daemon itself
sudo journalctl -u containerd -f
```

The cases where this pays off are specific: a pod that refuses to start and whose kubelet logs do not explain why (containerd often has the detail in journalctl -u containerd); a node with a full disk that needs crictl rmi --prune because the Kubernetes GC is falling behind; an ImagePullBackOff that a direct crictl pull helps untangle by isolating whether the problem is credentials or networking; or auditing which runtime each node runs with kubectl get nodes -o wide.
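The ImagePullBackOff case can be sketched as a small triage helper. This assumes crictl is installed and configured for containerd; triage_pull is a hypothetical name, and the messages it prints are hints rather than a definitive diagnosis.

```shell
#!/bin/sh
# Sketch: separate credential problems from network problems on a node.
# Assumes crictl is installed and configured for containerd.
# triage_pull is a hypothetical helper name, not a crictl feature.
triage_pull() {
    image="$1"
    creds="${2:-}"   # optional USERNAME:PASSWORD for crictl pull --creds

    # 1. Anonymous pull: success means the registry is reachable from the node.
    if crictl pull "$image" >/dev/null 2>&1; then
        echo "anonymous pull ok: registry reachable, no credentials needed"
        return 0
    fi

    # 2. Authenticated pull: success here points at the pod's pull secrets.
    if [ -n "$creds" ] && crictl pull --creds "$creds" "$image" >/dev/null 2>&1; then
        echo "authenticated pull ok: check the pod's imagePullSecrets"
        return 0
    fi

    # 3. Neither worked: the containerd journal usually has the detail.
    echo "pull failed: check DNS, routing, proxy, or the credentials themselves"
    return 1
}
```

A typical call would be triage_pull followed by the image reference from the failing pod and, optionally, the credentials the pod is supposed to use.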

containerd’s main configuration file lives at /etc/containerd/config.toml. Common edits are adjusting registry mirrors to avoid saturating Docker Hub, configuring alternative runtimes such as gVisor for sensitive workloads, choosing a snapshotter, and above all setting the cgroup driver to systemd so that it matches kubelet. That last one is a classic installation mistake: kubelet on systemd and containerd on cgroupfs produces mysterious pod restarts that are painful to diagnose. Any change requires systemctl restart containerd and, in production, doing it node by node with cordon and drain.
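The cgroup driver mismatch can be checked before it bites. The sketch below makes several assumptions: that containerd’s runc options set SystemdCgroup = true when systemd is in use, that kubelet’s configuration file carries a cgroupDriver field and lives at /var/lib/kubelet/config.yaml (the usual kubeadm location), and that a missing setting on either side means cgroupfs. All function names are hypothetical.

```shell
#!/bin/sh
# Sketch: compare the cgroup driver configured for containerd and kubelet.
# Assumptions: file locations below, and "not set" treated as cgroupfs.
containerd_uses_systemd() {
    grep -Eq '^[[:space:]]*SystemdCgroup[[:space:]]*=[[:space:]]*true' "$1" 2>/dev/null
}

kubelet_uses_systemd() {
    grep -Eq '^[[:space:]]*cgroupDriver:[[:space:]]*"?systemd"?[[:space:]]*$' "$1" 2>/dev/null
}

cgroup_drivers_match() {
    containerd_cfg="${1:-/etc/containerd/config.toml}"
    kubelet_cfg="${2:-/var/lib/kubelet/config.yaml}"
    c=cgroupfs
    k=cgroupfs
    if containerd_uses_systemd "$containerd_cfg"; then c=systemd; fi
    if kubelet_uses_systemd "$kubelet_cfg"; then k=systemd; fi
    echo "containerd=$c kubelet=$k"
    # Exit status 0 only when both sides agree.
    [ "$c" = "$k" ]
}
```

Running cgroup_drivers_match on each node before and after editing config.toml gives a quick signal; a non-zero exit status means the two files disagree under the assumptions above.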

Closing

containerd is the quiet piece on which most of Kubernetes runs in 2023. Understanding its architecture is not academic vanity: it is what turns an opaque incident into a diagnosable one, what lets you make informed decisions about alternative runtimes when security or isolation demand them, and what lets you read release notes about CRI, snapshotters or shims with actual comprehension. Under ordinary operation it will remain a layer you do not descend into; the day you have to, you need to know the route.